OneIE: A Joint Neural Model for Information Extraction with Global Features

Ying Lin, Heng Ji, Fei Huang, Lingfei Wu

Contact: hengji@illinois.edu, yinglin8@illinois.edu

Please email Ying Lin if you experience any technical issues using our software or need further information.

About

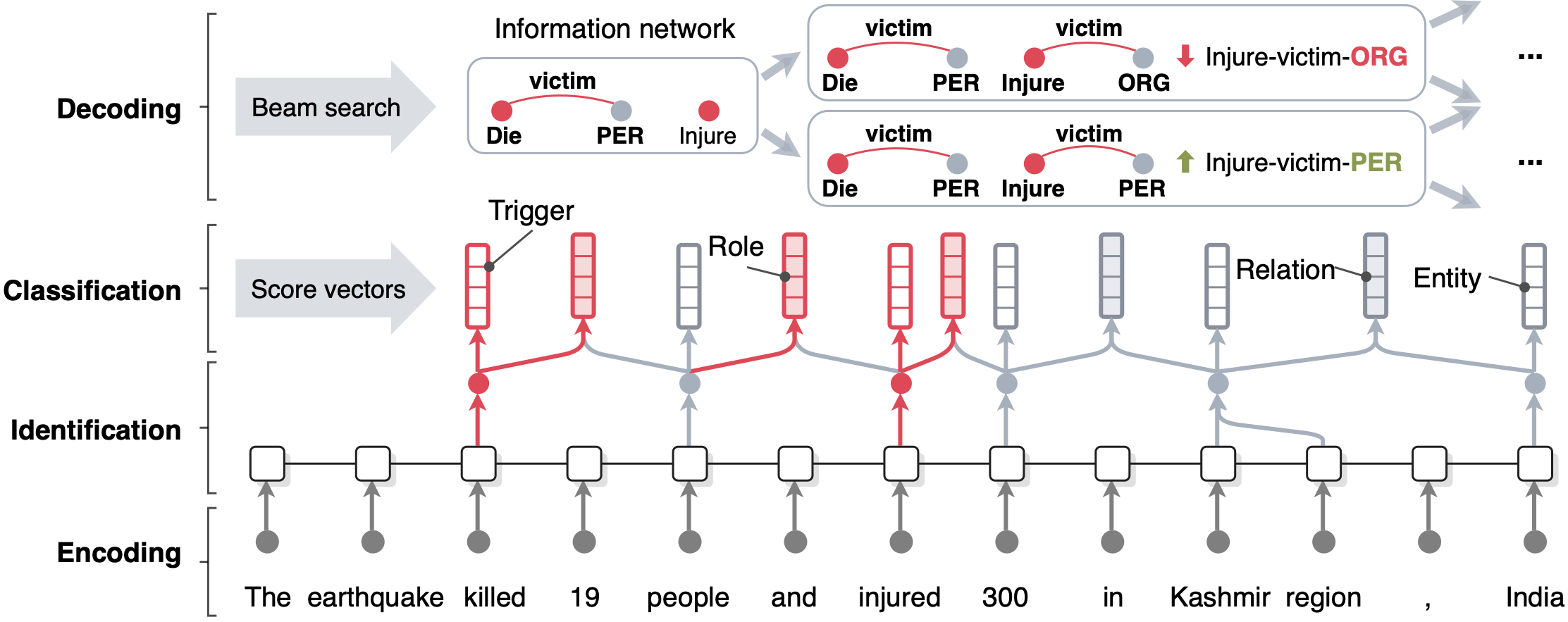

Given a sentence, our OneIE framework aims to extract an information network representation, where entity mentions and event triggers are represented as nodes, and relations and event-argument links are represented as edges. In other words, we jointly perform entity, relation, and event extraction within a unified framework.

Tasks

- Entity Extraction aims to identify entity mentions in text and classify them into pre-defined entity types. For example, "Kashmir region" should be recognized as a Location (loc) mention. A mention can be a name, nominal, or pronoun.

- Relation Extraction is the task of assigning a relation type to a pair of entity mentions. For example, there is a part-whole relation between "Kashmir region" and "India".

- Event Extraction entails identifying event triggers (the words or phrases that most clearly express event occurrences) and their arguments (the words or phrases for participants in those events) in unstructured text, and classifying triggers by event type and arguments by role. An argument can be an entity, time expression, or value (e.g., money, job-title, crime). For example, the word "injured" triggers an injure event and "300" serves as the victim argument.
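To make the information network idea concrete, here is a minimal sketch of how the examples above could be stored as a graph. This is an illustration only, not OneIE's internal data structure or output format: the class names (`Node`, `Edge`, `InfoNetwork`), the helper `build_example`, and the edge directions are all hypothetical. Types that the text above does not state (for "India" and "300") are left as `None` rather than guessed.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Node:
    text: str                    # surface string of the mention or trigger
    kind: str                    # "entity" or "trigger"
    label: Optional[str] = None  # entity type or event type, if known


@dataclass(frozen=True)
class Edge:
    source: int  # index of the head node
    target: int  # index of the tail node
    kind: str    # "relation" or "argument"
    label: str   # relation type or argument role


@dataclass
class InfoNetwork:
    """Hypothetical container: nodes are mentions/triggers, edges are links."""
    nodes: List[Node] = field(default_factory=list)
    edges: List[Edge] = field(default_factory=list)

    def add_node(self, text: str, kind: str, label: Optional[str] = None) -> int:
        self.nodes.append(Node(text, kind, label))
        return len(self.nodes) - 1

    def add_edge(self, source: int, target: int, kind: str, label: str) -> None:
        self.edges.append(Edge(source, target, kind, label))

    def arguments_of(self, trigger_idx: int) -> List[Tuple[str, str]]:
        """Return (argument text, role) pairs attached to one trigger."""
        return [
            (self.nodes[e.target].text, e.label)
            for e in self.edges
            if e.kind == "argument" and e.source == trigger_idx
        ]


def build_example() -> InfoNetwork:
    g = InfoNetwork()
    kashmir = g.add_node("Kashmir region", "entity", "loc")
    india = g.add_node("India", "entity")    # type not stated above
    injured = g.add_node("injured", "trigger", "injure")
    victims = g.add_node("300", "entity")    # type not stated above
    # Direction (part -> whole) is an assumption made for this sketch.
    g.add_edge(kashmir, india, "relation", "part-whole")
    g.add_edge(injured, victims, "argument", "victim")
    return g


if __name__ == "__main__":
    network = build_example()
    for edge in network.edges:
        head = network.nodes[edge.source].text
        tail = network.nodes[edge.target].text
        print(f"{head} --{edge.label}--> {tail}")
```

Storing relations and event-argument links in one edge list mirrors the description above, where a single graph covers all three extraction tasks.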

Download

OneIE can be downloaded via the links in the table below.

OneIE Models

train.py.

| Training Data | Language | Version | Description | Link |
|---|---|---|---|---|
| ACE2005, ERE | English, Spanish, Chinese | v0.4.8 | - Added the training script. - Added pre-processing scripts. - [0.4.2] Fixed bugs in preprocessing/process_dygiepp.py and util.py. - [0.4.3] Updated preprocessing/process_ace.py and README.md. - [0.4.3] Added document id lists for datasets used in the paper in resource/splits. - [0.4.4] Updated preprocessing/process_ere.py. - [0.4.5] Improved preprocessing scripts. - [0.4.6] Fixed a minor bug. - [0.4.7] Changed BertTokenizerFast and RobertaTokenizerFast back to BertTokenizer and RobertaTokenizer. - [0.4.8] Fixed an issue in local graph generation. | Software, English Model, Chinese Model, Spanish Model |
| ACE2005, ERE | English, Spanish, Chinese | v0.3.4 | - Support plain text format input. - We added a Spanish model trained on Spanish and English ERE data (LDC2015E29, LDC2015E68, LDC2015E78, and LDC2015E107). - Fixed a few errors in README. - We added a Chinese model trained on Chinese and English ACE data. - Fixed word tokenization and sentence tokenization for Chinese. | Software, English Model, Chinese Model, Spanish Model |
| ACE2005 | English | v0.2 | - We trained a new model on cleaned training data. - Support cold-start format output. | Software & Model |
| ACE2005 | English | v0.1 | - OneIE v0.1 supports 7 coarse-grained entity types, 6 coarse-grained relation types, and 33 event types. - Support relation directions. - Support single- and multi-token event triggers. - Support LTF (Logical Text Format) input. | Software & Model |

Acknowledgement

This research is based upon work supported in part by U.S. DARPA KAIROS Program No. FA8750-19-2-1004, U.S. DARPA AIDA Program No. FA8750-18-2-0014, Air Force No. FA8650-17-C-7715, and the Office of the Director of National Intelligence (ODNI), Intelligence Advanced Research Projects Activity (IARPA), via contract No. FA8650-17-C-9116. The views and conclusions contained herein are those of the authors and should not be interpreted as necessarily representing the official policies, either expressed or implied, of DARPA, ODNI, IARPA, or the U.S. Government. The U.S. Government is authorized to reproduce and distribute reprints for governmental purposes notwithstanding any copyright annotation therein.

References

Ying Lin, Heng Ji, Fei Huang, and Lingfei Wu. 2020. A Joint Neural Model for Information Extraction with Global Features. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics.